Published by Ponemon Institute LLC, December 2013.

Ponemon Institute© Research Report is Sponsored by Emerson Network Power.

Part 1. Executive Summary

Ponemon Institute and Emerson Network Power are pleased to present the results of the 2013 Cost of Data Center Outages study. First conducted in 2010, this benchmark study aims to determine the full economic cost of unplanned data center outages. It is the second study in a two-part research series on the topic of data center outages.

The first study, 2013 Study on Data Center Outages, was released in September 2013 and was conducted to determine the frequency and root causes of unplanned data center outages. We believe both studies are important because of evidence that IT leaders are underestimating the economic impact unplanned outages have on their operations.

The 2013 Cost of Data Center Outages is the only benchmark study that attempts to estimate the full costs associated with an unplanned data center outage. According to the study, the cost of a data center outage has increased since 2010. The cost per square foot of data center outages now ranges from $45 to $95, or from a minimum of $74,223 to a maximum of $1,734,433 per organization in our study. The overall average cost is $627,418 per incident. This benchmark analysis focuses on representative samples of organizations in the U.S. that experienced at least one complete or partial unplanned data center outage during the past 12 months. The analysis was based on 67 independent data centers located in the United States.

Following are the functional leaders within each organization who participated in the study:

- Facility manager

- Chief information officer

- Data center management

- Chief information security officer

- IT compliance leader

Utilizing activity-based costing, our methods capture information about both direct and indirect costs, including but not limited to the following areas:

- Damage to mission critical data

- Impact of downtime on organizational productivity

- Damages to equipment and other assets

- Cost to detect and remediate systems and core business processes

- Legal and regulatory impact, including litigation defense cost

- Lost confidence and trust among key stakeholders

- Diminishment of marketplace brand and reputation

Following are some of the key findings of our benchmark research involving 67 data centers located throughout the nation.

- Total cost of partial and complete outages can be a significant expense for organizations.

- Total cost of outages is systematically related to the duration of the outage.

- Total cost of outages is systematically related to the size of the data center.

- Certain causes of the outage are more expensive than others. Specifically, IT equipment failure is the most expensive root cause. Accidental/human error is least expensive.

Part 2. Cost Framework

Utilizing activity-based costing, our study addresses nine core process-related activities that drive a range of expenditures associated with a company’s response to a data outage. The activities and cost centers used in our analysis are defined as follows:

- Detection cost: Activities associated with the initial discovery and subsequent investigation of the partial or complete outage incident.

- Containment cost: Activities and associated costs that enable a company to reasonably prevent an outage from spreading, worsening or causing greater disruption.

- Recovery cost: Activities and associated costs that relate to bringing the organization’s networks and core systems back to a state of readiness.

- Ex-post response cost: All after-the-fact incidental costs associated with business disruption and recovery.

- Equipment cost: The cost of new equipment purchases and repairs, including refurbishment.

- IT productivity loss: The lost time and related expenses associated with IT personnel downtime.

- User productivity loss: The lost time and related expenses associated with end-user downtime.

- Third-party cost: The cost of contractors, consultants, auditors and other specialists engaged to help resolve unplanned outages.

In addition to the above process-related activities, most companies experience opportunity costs associated with the data outage, which results in lost revenue, business disruption and average contribution. Accordingly, our cost framework includes the following categories:

- Lost revenues: The total revenue loss from customers and potential customers because of their inability to access core systems during the outage period.

- Business disruption (consequences): The total economic loss of the outage including reputational damages, customer churn and lost business opportunities.

Figure 1 presents the activity-based costing framework used in this research, which consists of 10 discernible categories. As shown, the four internal activities or cost centers include detection, containment, recovery, and ex-post response. Each activity generates direct, indirect, and opportunity costs, respectively. The consequence of the unplanned data center outage includes equipment repair or replacement, IT productivity loss, end-user productivity loss, third parties (such as consultants), lost revenues and the overall disruption to core business processes. Taken together, we then infer the cost of an unplanned data center outage.

Figure 1: Activity-based cost account framework
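The framework in Figure 1 can be read as a roll-up of ten cost categories: four internal activity centers and six consequence categories. The Python sketch below illustrates that structure only. The class name, field names, helper functions and dollar amounts are hypothetical, and the assumption that total cost is a plain sum of the ten categories is ours rather than a documented formula from the study.

```python
from dataclasses import dataclass, fields

# Names of the four internal activity centers (hypothetical identifiers).
ACTIVITY_CENTERS = ("detection", "containment", "recovery", "ex_post_response")


@dataclass
class OutageCost:
    # Four internal activity centers
    detection: float = 0.0
    containment: float = 0.0
    recovery: float = 0.0
    ex_post_response: float = 0.0
    # Six consequence categories
    equipment: float = 0.0
    it_productivity_loss: float = 0.0
    user_productivity_loss: float = 0.0
    third_party: float = 0.0
    lost_revenue: float = 0.0
    business_disruption: float = 0.0


def activity_cost(cost: OutageCost) -> float:
    """Sum of the four internal activity centers."""
    return sum(getattr(cost, name) for name in ACTIVITY_CENTERS)


def total_cost(cost: OutageCost) -> float:
    """Sum of all ten categories (assumed to be additive)."""
    return sum(getattr(cost, f.name) for f in fields(cost))


# Hypothetical incident; these figures are illustrative, not study data.
incident = OutageCost(
    detection=20_000, containment=15_000, recovery=25_000, ex_post_response=10_000,
    equipment=9_000, it_productivity_loss=40_000, user_productivity_loss=100_000,
    third_party=6_000, lost_revenue=120_000, business_disruption=180_000,
)
print(activity_cost(incident), total_cost(incident))
```

Keeping the activity centers in a separate tuple makes it easy to report an "activity cost" subtotal alongside the overall total.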

Part 3. Benchmark Methods

Our benchmark instrument was designed to collect descriptive information from IT practitioners and managers of data center facilities about the costs incurred either directly or indirectly as a result of unplanned outages. The survey design relies upon a shadow costing method used in applied economic research. This method does not require subjects to provide actual accounting results, but instead relies on broad estimates based on the experience of individuals within participating organizations.

The benchmark framework in Figure 1 presents the two separate cost streams used to measure the total cost of an unplanned outage for each participating organization. These two cost streams pertain to internal activities and the external consequences experienced by organizations during or after experiencing an incident. Our benchmark methodology contains questions designed to elicit the actual experiences and consequences of each incident. This cost study is unique in addressing the core systems and business process-related activities that drive a range of expenditures associated with a company’s incident management response.

Within each category, cost estimation is a two-stage process. First, the survey requires individuals to provide direct cost estimates for each cost category by checking a range variable. A range variable is used rather than a point estimate to preserve confidentiality (in order to ensure a higher response rate). Second, the survey requires participants to provide a second estimate for both indirect cost and opportunity cost, separately. These estimates are calculated based on the relative magnitude of these costs in comparison to a direct cost within a given category. Finally, we conduct a follow-up interview to obtain additional facts, including estimated revenue losses as a result of the outage.
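The two-stage estimate described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions: the report does not say how a checked range is converted to a dollar figure, so taking the midpoint is our assumption, as is expressing indirect and opportunity cost as simple ratios of direct cost. All function names and numbers are hypothetical.

```python
def range_midpoint(low: float, high: float) -> float:
    """Convert a checked cost range to a single value (midpoint is an assumption)."""
    return (low + high) / 2


def estimate_category_cost(direct_range, indirect_ratio, opportunity_ratio):
    """Stage 1: direct cost from a range. Stage 2: indirect and opportunity
    cost expressed relative to the direct cost of the same category."""
    direct = range_midpoint(*direct_range)
    indirect = direct * indirect_ratio
    opportunity = direct * opportunity_ratio
    return {
        "direct": direct,
        "indirect": indirect,
        "opportunity": opportunity,
        "total": direct + indirect + opportunity,
    }


# Hypothetical respondent: direct cost between $10,000 and $20,000,
# indirect cost judged 1.5x direct, opportunity cost judged 0.25x direct.
example = estimate_category_cost((10_000, 20_000), 1.5, 0.25)
print(example)
```

Revenue losses gathered in the follow-up interview would be added separately; they are left out of this sketch.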

The size and scope of survey items is limited to known cost categories that cut across different industry sectors. In our experience, a survey focusing on process yields a higher response rate and better quality of results. We also use a paper instrument, rather than an electronic survey, to provide greater assurances of confidentiality.

In total, the benchmark instrument contains descriptive costs for each one of the five cost activity centers. Within each cost activity center, the survey requires respondents to estimate the cost range to signify direct cost, indirect cost and opportunity cost, defined as follows:

- Direct cost – the direct expense outlay to accomplish a given activity.

- Indirect cost – the amount of time, effort and other organizational resources spent, but not as a direct cash outlay.

- Opportunity cost – the cost resulting from lost business opportunities as a consequence of reputation diminishment after the outage.

To maintain complete confidentiality, the survey instrument does not capture company-specific information of any kind. Research materials do not contain tracking codes or other methods that could link responses to participating companies.

To keep the benchmark instrument to a manageable size, we carefully limited items to only those cost activities we consider crucial to the measurement of data center outage costs. Based on discussions with learned experts, the final set of items focuses on a finite set of direct or indirect cost activities. After collecting benchmark information, each instrument is examined carefully for consistency and completeness. In this study, four companies were rejected because of incomplete, inconsistent, or blank responses.

The study was launched in July 2013 and fieldwork concluded in October 2013. The recruitment started with a personalized letter and a follow-up phone call to 563 US-based organizations for possible participation in our study.¹ This resulted in 83 organizations agreeing to participate. Fifty organizations (67 separate data centers) permitted researchers to complete the benchmark analysis.

Three cases were removed for reliability concerns and two cases were removed because those data centers fell below the minimum size requirement. Utilizing activity-based costing methods, we captured cost estimates using a standardized instrument for direct and indirect cost categories. Specifically, labor (productivity) and overhead costs were allocated to four internal activity centers and these flow through to six cost consequence categories (see Figure 1).

Total costs were then allocated to only one (the most recent) data center outage experienced by each organization. We collected information over approximately the same time frame; hence, this limits our ability to gauge seasonal variation on the total cost of an unplanned data center outage.

¹The US-based companies contacted are all members of Ponemon Institute’s benchmark community. Most of these are organizations that have utilized the Institute’s benchmarking services for cost analysis over the past 12 years.

Part 4. Sample of Participating Companies & Data Centers

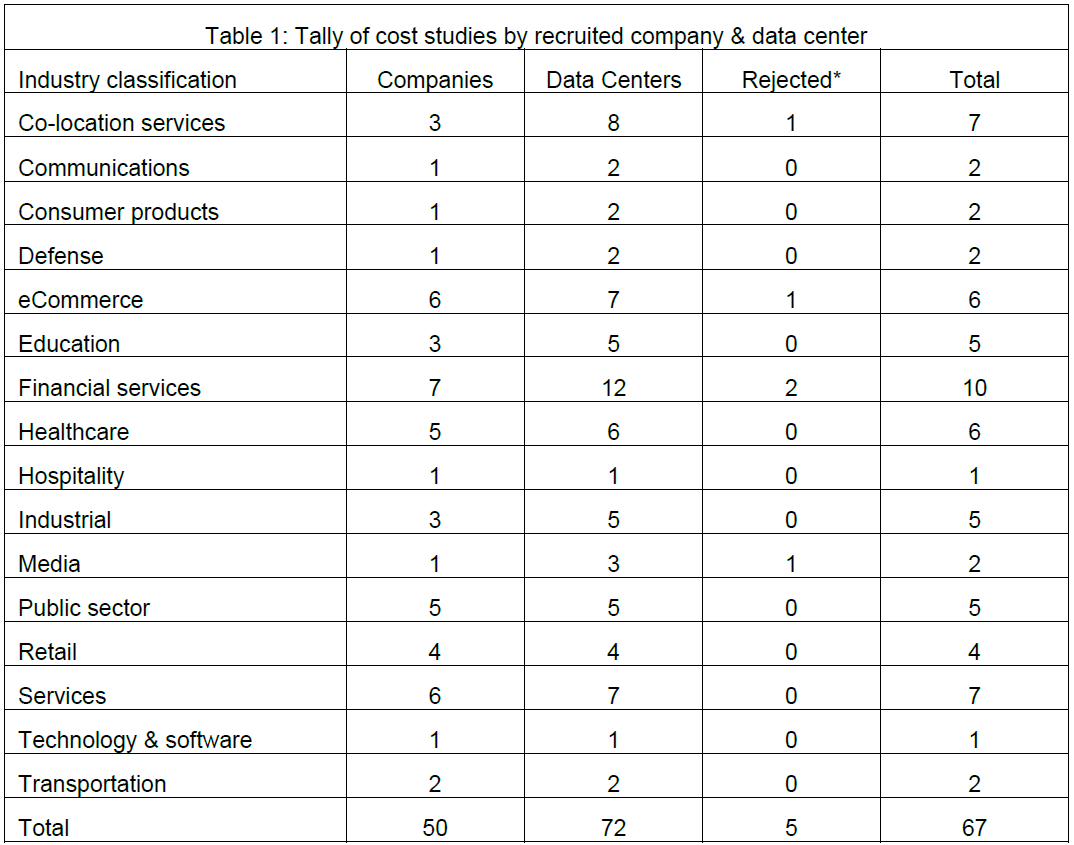

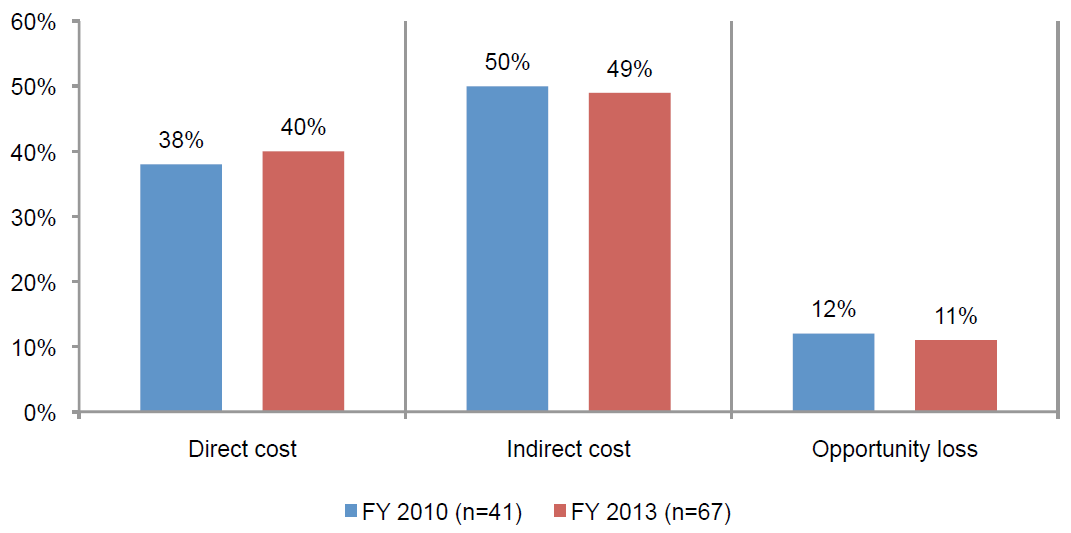

The following table summarizes the frequency of companies and separate data centers participating in the benchmark study. As reported, a total of 16 industries are represented in the sample.

Our final sample includes a total of 50 separate organizations representing 67 data centers – which is the unit of analysis. A total of five organizations were rejected from the final sample for incomplete responses to our survey instrument, thus resulting in a final sample of 67 data centers.

The following table summarizes participating data center size according to total square footage and the duration of both partial and complete unplanned outages. The average size of the data center in this study is 12,558 square feet and the average outage duration is 86 minutes.

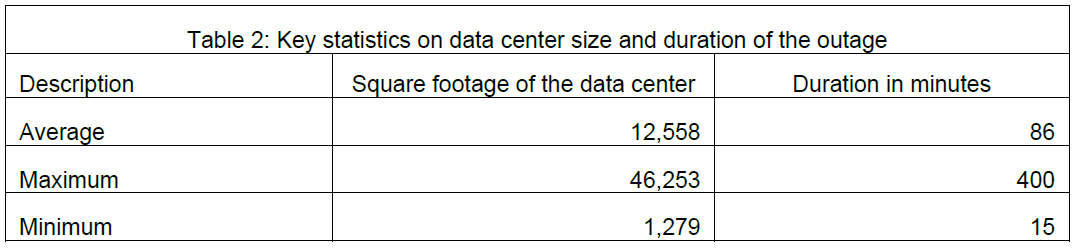

Pie Chart 1 summarizes the sample of participating companies’ data centers according to 16 primary industry classifications. As can be seen, financial services and co-location services are the two largest industry segments representing 15 and 10 percent of the sample, respectively. Financial services companies include retail banking, insurance, brokerage, and investment management companies.

Pie Chart 1: Distribution of participating organizations by industry segment

Computed from 67 benchmarked data centers

Pie Chart 2 reports the percentage frequency of companies based on their geographic location according to six regions in the United States. The Northeast represents the largest region (at 21 percent) and the Southwest represents the smallest (at 12 percent).

Pie Chart 2: Distribution of participating organizations by US geographic region

Computed from 67 benchmarked data centers

Part 5. Key Findings

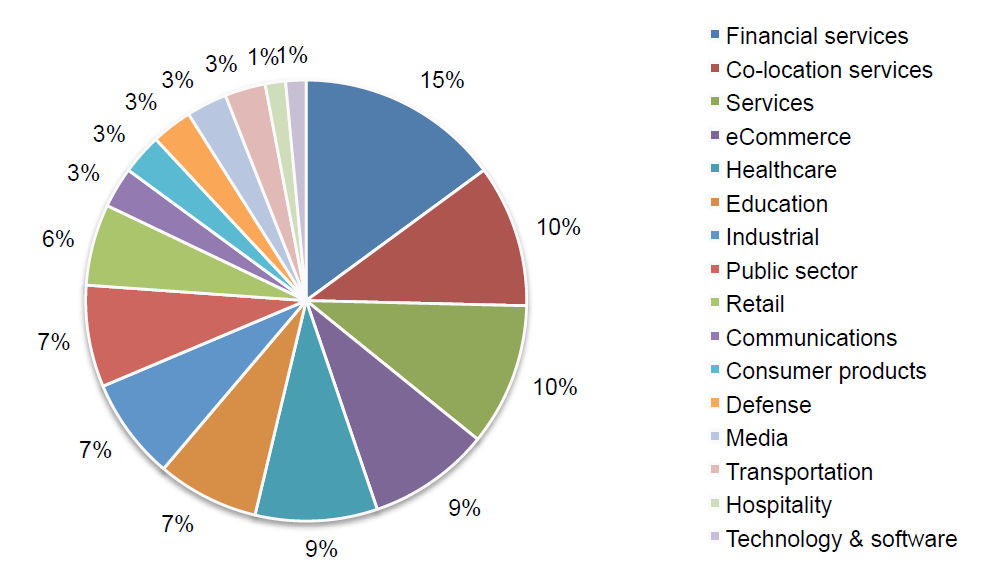

Bar Chart 1 reports the cost structure on a percentage basis for all cost activities for FY 2010 and FY 2013. As shown, the cost mix has remained stable over the past three years. Indirect cost represents about half and opportunity loss represents 12 percent of total cost of outages.

Bar Chart 1: Percentage cost structure of unplanned data center outages

Table 3 summarizes the cost of unplanned outages for all 67 data centers. Please note that cost statistics are derived from the analysis of one unplanned outage incident.

Bar Chart 2 reveals significant variation across nine cost categories for FY 2010 and FY 2013. The cost associated with business disruption, which includes reputation damages and customer churn, represents the most expensive cost category. Least expensive involves the engagement of third parties such as consultants to aid in the resolution of the incident.

Bar Chart 2: Comparison of FY 2010 and FY 2013 activity cost categories

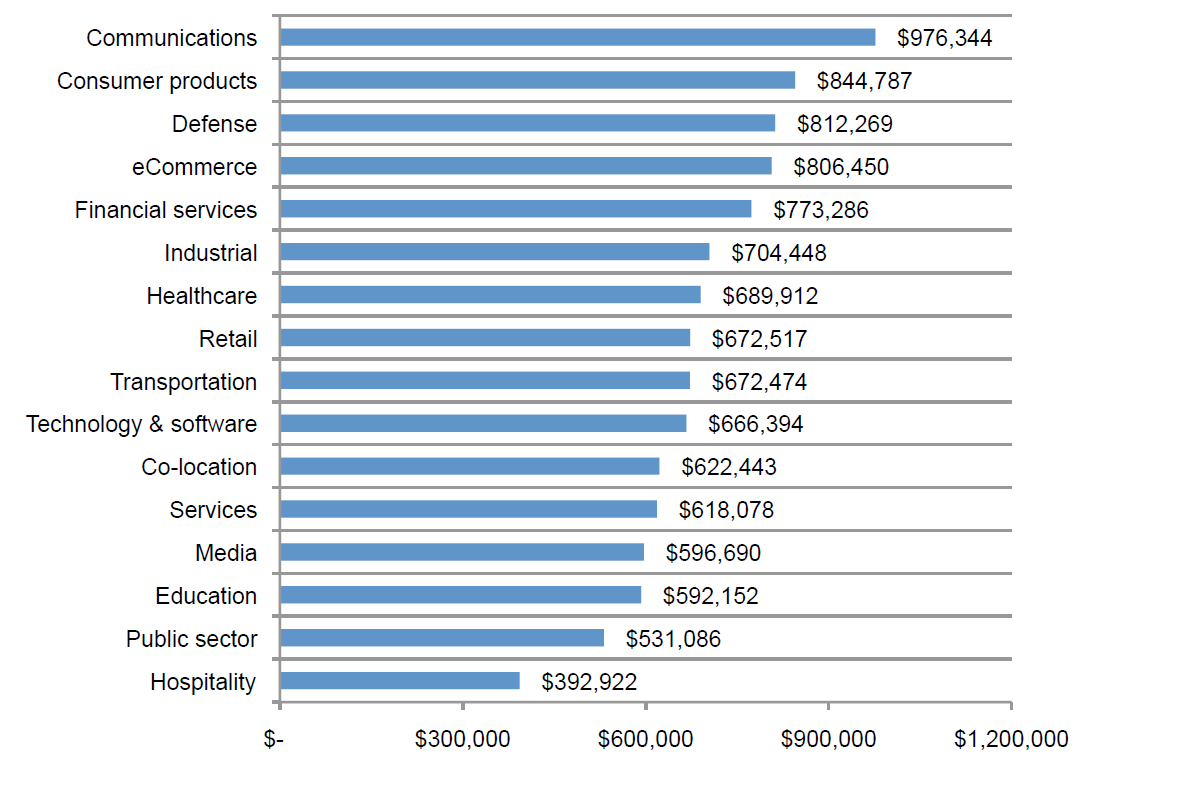

Bar Chart 3 provides the total cost of unplanned outages for 16 industry segments included in our benchmark sample. The analysis by industry is limited because of a small sample size; however, it is interesting to see wide variation across segments ranging from a high of almost $1 million (communications) to a low of almost $400,000 (hospitality).

Bar Chart 3: Distribution of total cost for 15 industry segments

Computed from 67 benchmarked data centers

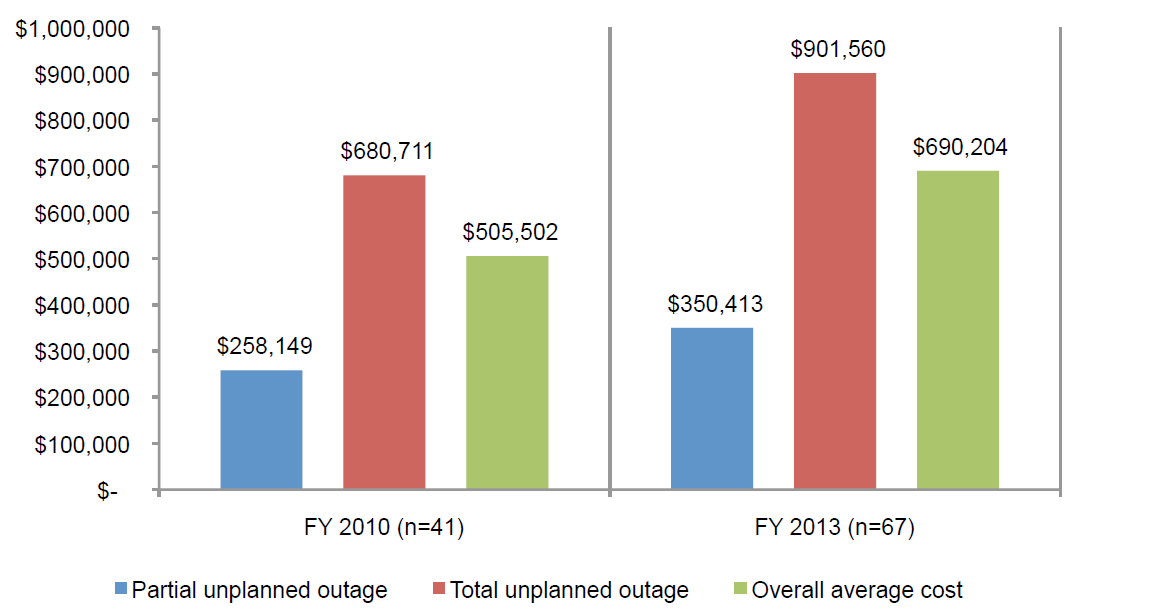

Bar Chart 4 compares costs for partial unplanned outages and complete unplanned outages. Similar to 2010, complete outages are more than twice as expensive as partial outages.

Bar Chart 4: Cost for partial and total shutdown

Comparison of FY 2010 and FY 2013 results

Bar Chart 5 compares the average duration (minutes) of the event for partial and complete outages. In this year’s study, complete unplanned outages, on average, last 63 minutes longer than partial outages.

Bar Chart 5: Length in time (minutes) for partial and total shutdown

Comparison of FY 2010 and FY 2013 results

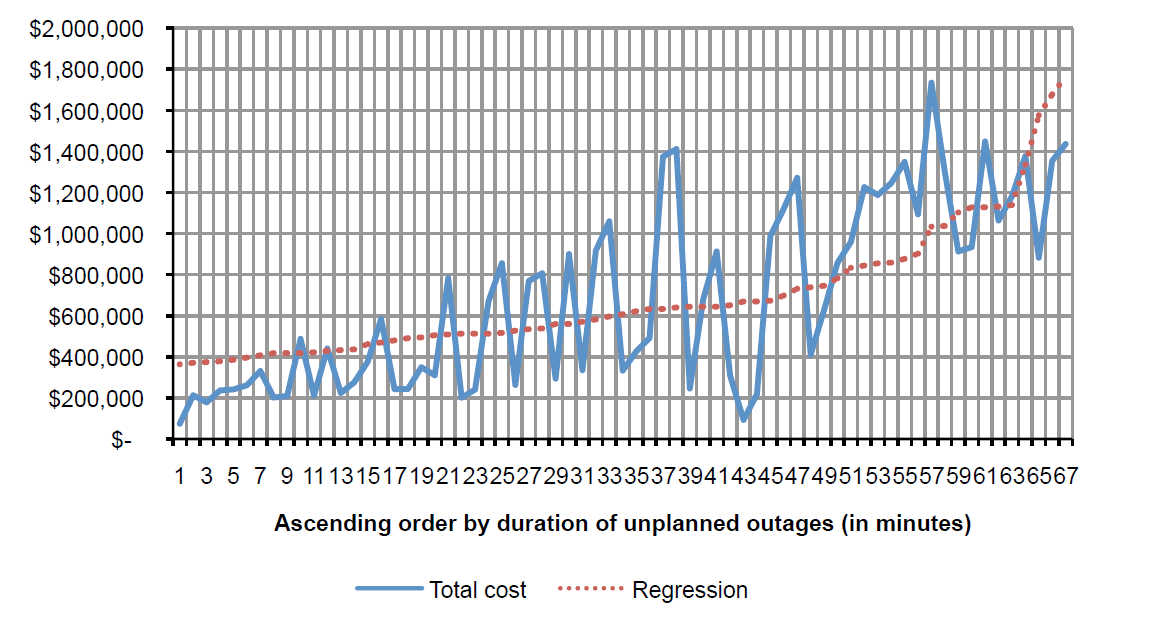

Graph 1 shows the relationship between outage cost and duration of the incident. The graph is organized in ascending order by duration of the outage in minutes. Accordingly, observation 1 has the shortest duration and observation 57 has the longest duration. The regression line is derived from the analysis of all 67 data centers. These results show that the cost of outage is linearly related to the duration of the outage.

Graph 1: Relationship between cost and duration of unplanned outages

Minutes of down time
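A regression line like the one in Graph 1 can be fitted with ordinary least squares. The report does not state which fitting procedure was used, so the Python sketch below is only an illustration of the general technique; the function name and the duration and cost values are hypothetical and are not study data.

```python
def fit_line(x, y):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Covariance and variance terms (unnormalized; the factor cancels).
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical outages: duration in minutes and total cost in dollars.
durations = [15, 40, 90, 120, 200]
costs = [150_000, 320_000, 650_000, 900_000, 1_500_000]

slope, intercept = fit_line(durations, costs)
print(f"Each additional minute adds roughly ${slope:,.0f} to the fitted cost")
```

The slope is the fitted cost of one more minute of downtime, and the intercept approximates the fixed cost incurred regardless of duration.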

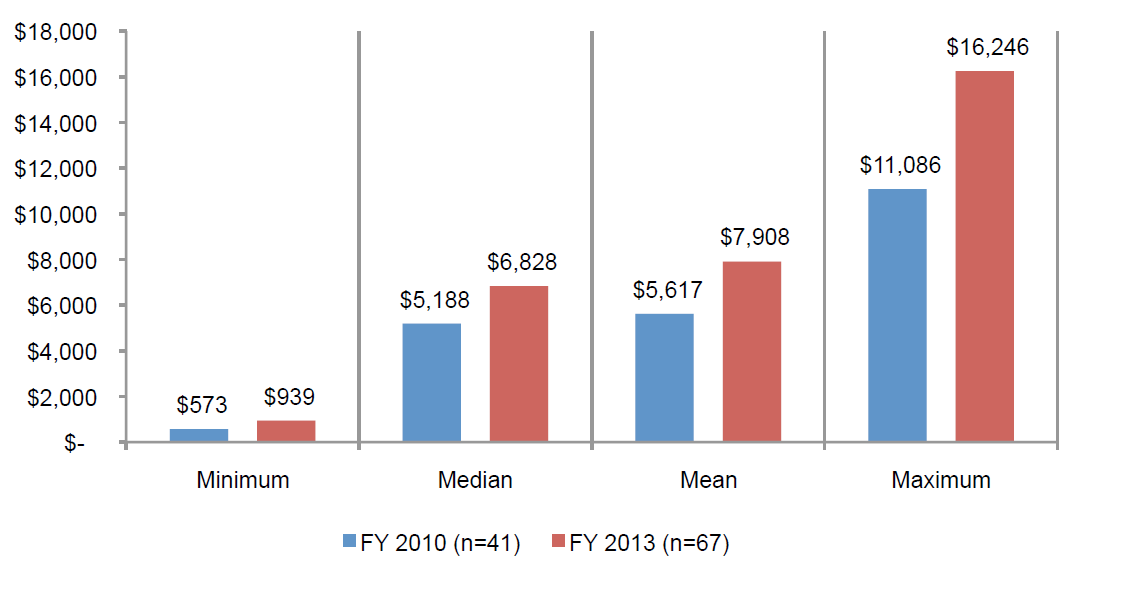

Bar Chart 6 reports the minimum, median, mean and maximum cost per minute of unplanned outages computed from 67 data centers. This chart shows that the maximum cost of an unplanned outage is over $16,000 per minute. On average, an unplanned outage costs almost $8,000 per minute.

Bar Chart 6: Total cost per minute of an unplanned outage

Comparison of FY 2010 and FY 2013
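The summary statistics in Bar Chart 6 can be illustrated with a short Python sketch. We assume here that cost per minute is computed for each incident and then summarized, which is one plausible reading; the report does not spell out the calculation. The sketch also shows that this per-incident mean generally differs from total cost divided by total minutes. Function names and figures are hypothetical.

```python
from statistics import mean, median


def cost_per_minute_summary(incidents):
    """Summarize per-incident cost per minute for (cost, minutes) pairs."""
    rates = [cost / minutes for cost, minutes in incidents]
    return {
        "min": min(rates),
        "median": median(rates),
        "mean": mean(rates),
        "max": max(rates),
    }


def pooled_cost_per_minute(incidents):
    """Total cost divided by total downtime across all incidents."""
    total_cost = sum(cost for cost, _ in incidents)
    total_minutes = sum(minutes for _, minutes in incidents)
    return total_cost / total_minutes


# Hypothetical incidents: (total cost in dollars, duration in minutes).
incidents = [(100_000, 20), (300_000, 100), (900_000, 90), (240_000, 40)]

summary = cost_per_minute_summary(incidents)
pooled = pooled_cost_per_minute(incidents)
print(summary, pooled)
```

Because long outages carry more weight in the pooled figure, the two averages should not be used interchangeably when comparing studies.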

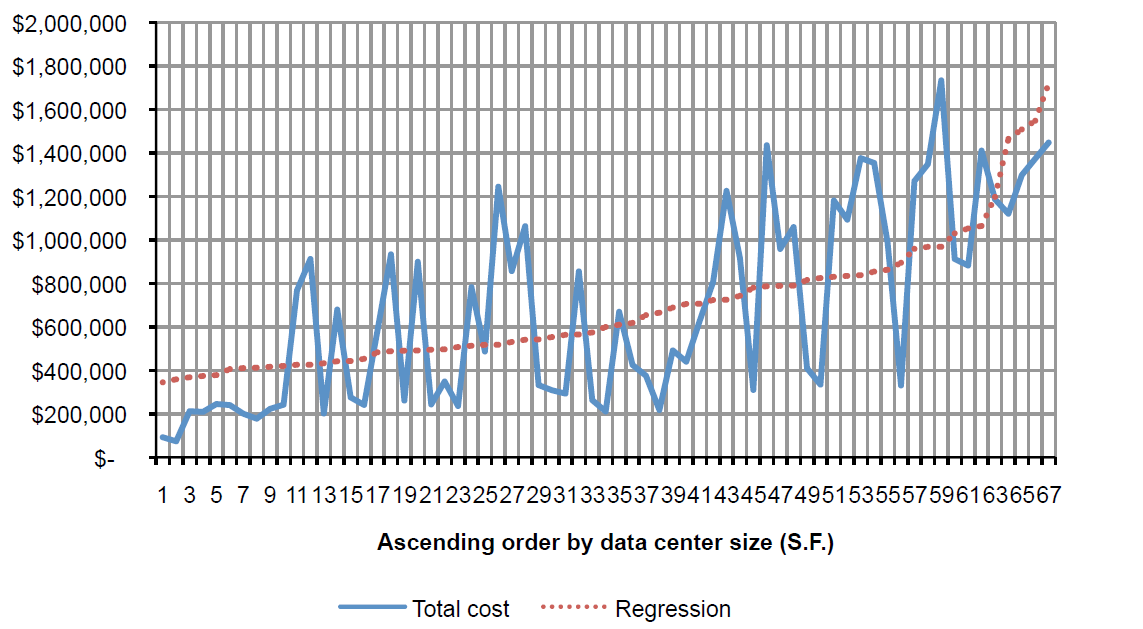

Graph 2 shows the relationship between data center size as measured by square footage and the total cost of unplanned outages. Observation 1 has the smallest and observation 59 has the largest data centers in square footage, respectively. The regression line is computed from the analysis of all 67 data centers. Similar to the duration analysis above, these results show that the cost of outage is linearly related to the size of the data center.

Graph 2: Relationship between cost and data center size (measured in square feet)

Bar Chart 7 reports the mean cost per square foot of unplanned outages based on all 67 data centers according to quartile. This chart shows that the highest cost of an unplanned outage is $95 per square foot, for the smallest quartile of data centers. The lowest average is $45 per square foot, for larger organizations.

Bar Chart 7: Cost per square foot of data center

Quartile mean S.F. is bracketed value
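A quartile breakdown of cost per square foot can be sketched in Python as below. This assumes data centers are sorted by size, split into four equal-sized groups, and averaged on a per-center cost per square foot basis; the report does not document its exact grouping, so treat these choices as assumptions. The function name and all values are hypothetical.

```python
def quartile_cost_per_sqft(centers):
    """Mean cost per square foot for each size quartile.

    `centers` is a list of (square_feet, outage_cost) pairs and must
    contain at least four entries so that no quartile is empty.
    """
    ordered = sorted(centers, key=lambda c: c[0])  # smallest first
    n = len(ordered)
    means = []
    for q in range(4):
        group = ordered[q * n // 4:(q + 1) * n // 4]
        per_sqft = [cost / sqft for sqft, cost in group]
        means.append(sum(per_sqft) / len(per_sqft))
    return means


# Hypothetical data centers: (size in square feet, outage cost in dollars).
centers = [
    (1_000, 90_000), (2_000, 140_000),
    (5_000, 350_000), (6_000, 300_000),
    (10_000, 600_000), (12_000, 480_000),
    (20_000, 900_000), (30_000, 1_050_000),
]
print(quartile_cost_per_sqft(centers))
```

In this made-up sample the smallest quartile has the highest cost per square foot, which mirrors the direction of the pattern reported in Bar Chart 7.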

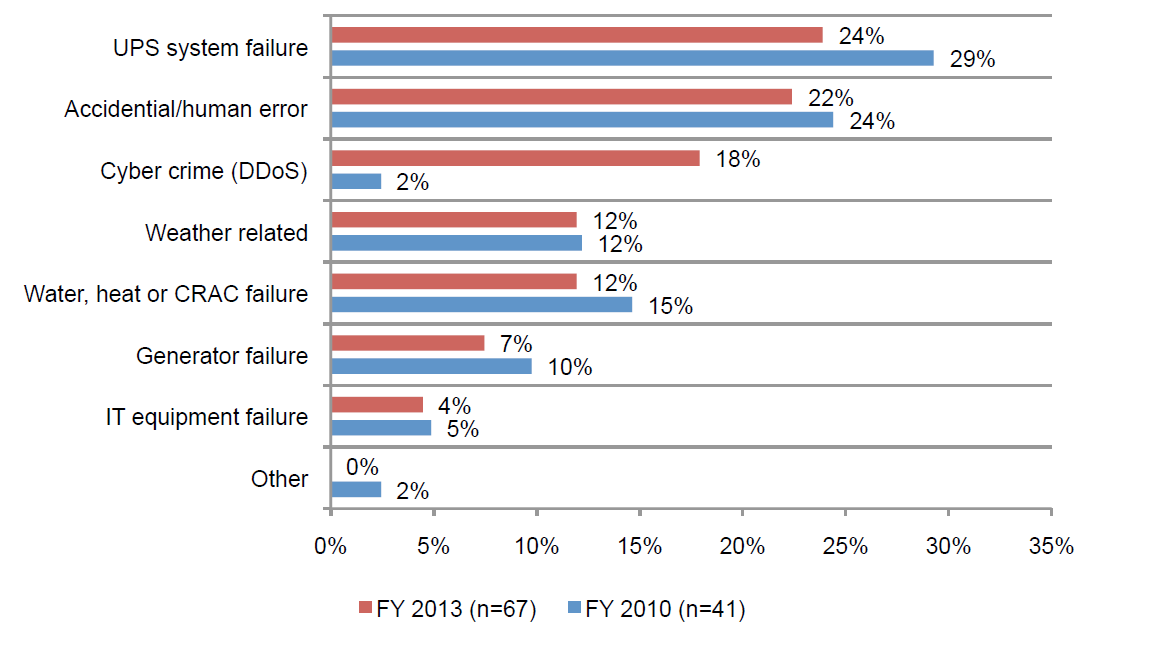

Bar Chart 8 groups the sample of 67 data centers by the primary root cause of the unplanned outage. The “other” category refers to incidents where the root cause could not be determined. As shown, 24 percent of companies rate UPS system failure as the primary root cause of the incident. Twenty-two percent rate accidental or human error, and 18 percent rate a cyber attack, as the primary root cause of the outage. IT equipment failure represents only four percent of all outages studied in this research.

Bar Chart 8: Root causes of unplanned outages

Comparison of FY 2010 and FY 2013 results

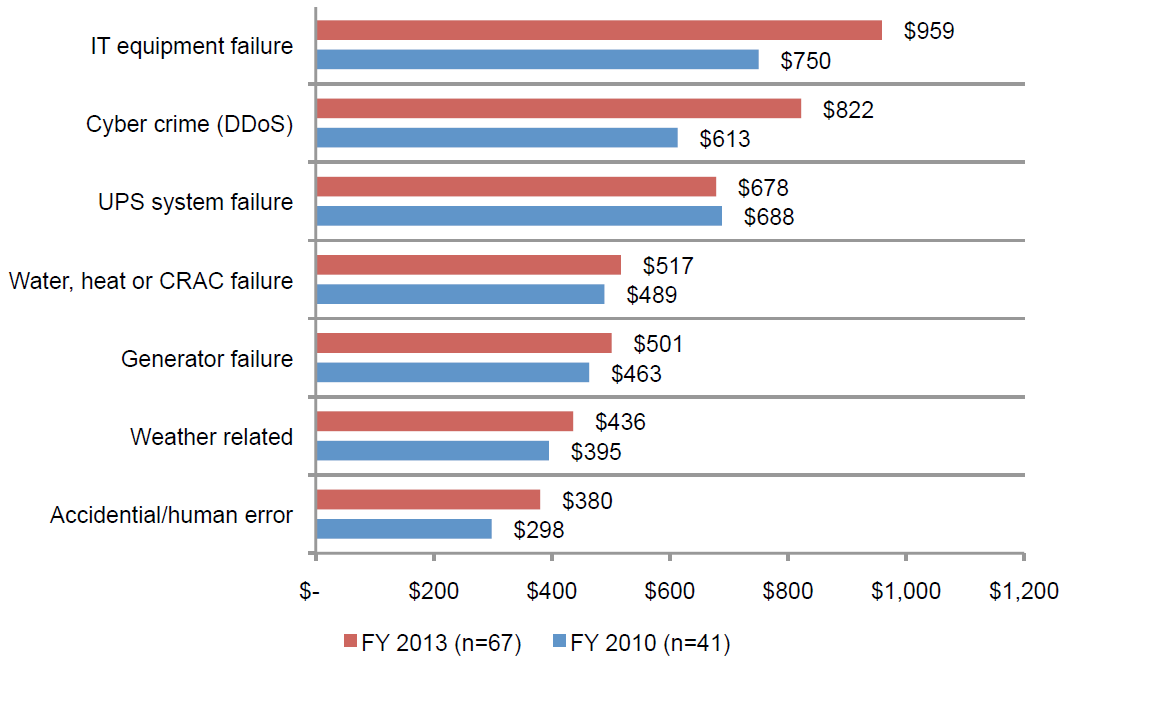

Bar Chart 9 reports the average cost of outage by primary root cause of the incident. As shown, IT equipment failures result in the highest outage cost, followed by cyber crime. The least expensive root cause appears to be accidental/human error.

Bar Chart 9: Total cost by primary root causes of unplanned outages

Comparison of FY 2010 and FY 2013 results

$000 omitted

Part 6. Concluding Thoughts

The results of this year’s study clearly reflect shifting attitudes and trends since the original Study on Data Center Outages and Cost of Data Center Outages were released in 2010. The update to the Study on Data Center Outages, released in September 2013, indicated an increase in data center downtime awareness and an elevated sense of urgency surrounding availability, as well as a surge in cyber attacks related to availability. The companion Cost of Data Center Outages study, which analyzed 67 data centers across varying industry segments, indicates a significant increase in the cost of unplanned data center outages since 2010.

The 2010 studies fueled a global discussion on the consequences of data center outages at industry events such as AFCOM Data Center World and in dozens of IT and data center publications. They helped define and reinforce a business case for data center availability and safeguarding uptime, which was not previously a consideration for many business decision makers.

Recent high-profile outages also caught the attention of these business leaders. From the Super Bowl to Twitter’s Fail Whale to outages of Amazon and Google, major disruptions to IT services around the globe helped bring downtime to the fore, reinforcing the criticality of availability and emphasizing the dramatic financial cost associated with unplanned downtime.

With today’s data centers providing more critical, interdependent devices and IT systems than ever before, the 41 percent increase in cost from 2010 was remarkably higher than expected. The results underscore the importance of minimizing the risk of downtime that can potentially cost thousands of dollars per minute. The expectation that uptime is now a baseline assumption, along with the urgency to deliver it in order to save on costs, reverberates through the findings of the study.

Industries with revenue models dependent on the data center’s availability to deliver IT and networking services to customers – such as telecommunications service providers and ecommerce companies – and those that deal with a large amount of secure data – such as defense contractors and financial institutions – continue to incur the most significant costs associated with downtime. The highest cost of a single event was more than $1.7 million.

Those same industries did see a slight decrease (two-to-five percent) compared to 2010 costs, while those organizations that traditionally have been less dependent on their data centers saw a significant increase. The largest increase was in the hospitality sector, which saw a 129 percent increase; followed by the public sector (116 percent), transportation (108 percent) and media organizations (104 percent).

As there is an increasing need for a growing number of companies and organizations to adapt to a more social, mobile and cloud-based model, the criticality of minimizing the risk of downtime and committing the necessary investments is greater than ever before. This report should serve as a resource for those needing to make more informed business decisions regarding the cost associated with eliminating vulnerabilities and anticipating the costs associated with inaction.

Part 7. Caveats

This study utilizes a confidential and proprietary benchmark method that has been successfully deployed in earlier Ponemon Institute research. However, there are inherent limitations to benchmark research that need to be carefully considered before drawing conclusions from findings.

- Non-statistical results: The purpose of this study is descriptive rather than normative inference. The current study draws upon a representative, non-statistical sample of data centers, all U.S.-based entities experiencing at least one unplanned outage over the past 12 months. Statistical inferences, margins of error and confidence intervals cannot be applied to these data given the nature of our sampling plan.

- Non-response: The current findings are based on a small representative sample of completed case studies. An initial mailing of benchmark surveys was sent to a benchmark group of over 560 organizations, all believed to have experienced one or more outages over the past 12 months. Sixty-seven data centers provided usable benchmark surveys. Non-response bias was not tested, so it is always possible that companies that did not participate are substantially different in terms of the methods used to manage the detection, containment, and recovery process, as well as the underlying costs involved.

- Sampling-frame bias: Because our sampling frame is judgmental, the quality of results is influenced by the degree to which the frame is representative of the population of companies and data centers being studied. It is our belief that the current sampling frame is biased toward companies with more mature data center operations.

- Company-specific information: The benchmark information is sensitive and confidential. Thus, the current instrument does not capture company-identifying information. It also allows individuals to use categorical response variables to disclose demographic information about the company and industry category. Industry classification relies on self-reported results.

- Unmeasured factors: To keep the survey concise and focused, we decided to omit other important variables from our analyses such as leading trends and organizational characteristics. The extent to which omitted variables might explain benchmark results cannot be estimated at this time.

- Extrapolated cost results: The quality of survey research is based on the integrity of confidential responses received from benchmarked organizations. While certain checks and balances can be incorporated into the survey process, there is always the possibility that respondents did not provide truthful responses. In addition, the use of a cost estimation technique (termed shadow costing methods) rather than actual cost data could create significant bias in presented results.

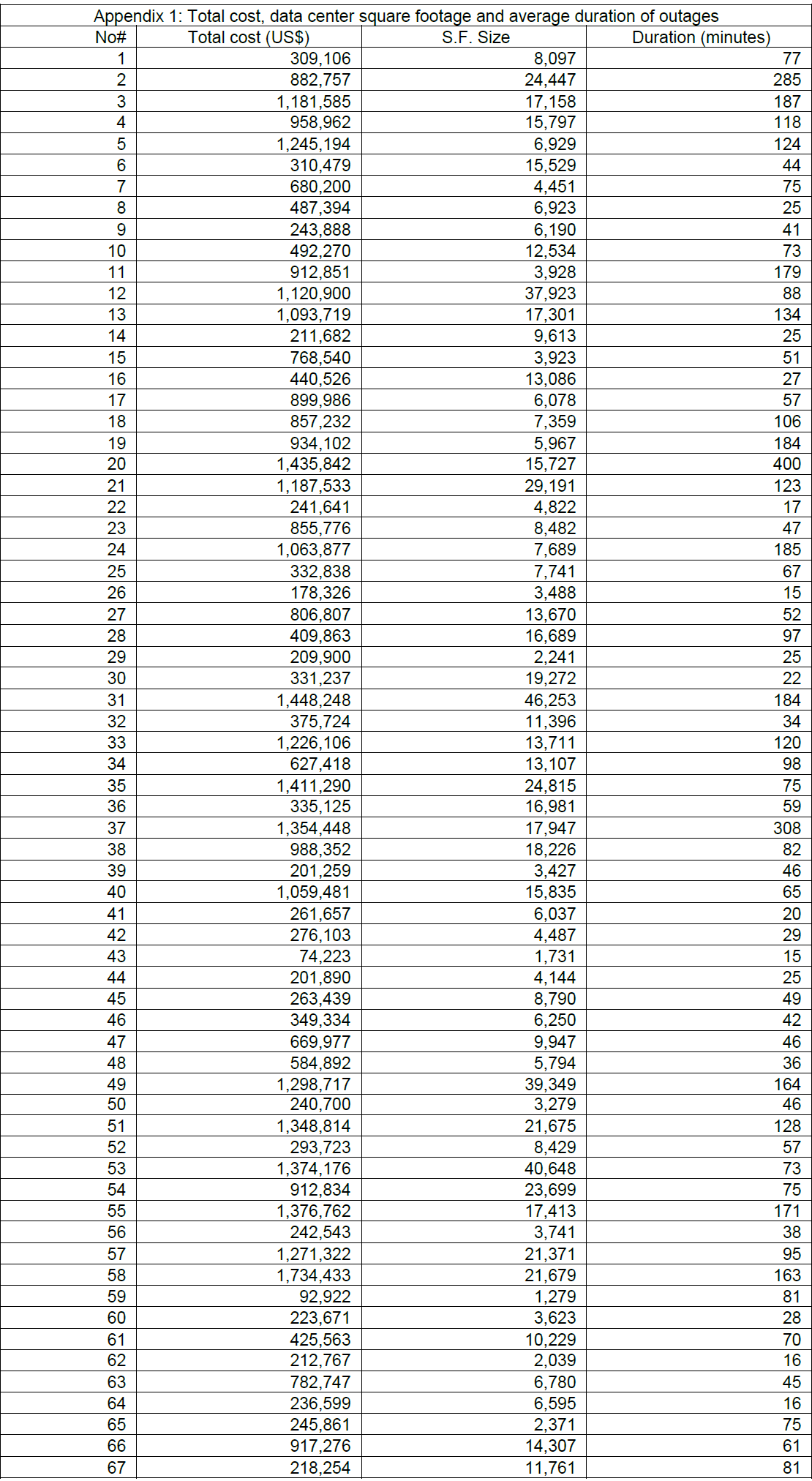

Appendix 1: Summarized data for 67 benchmarked data centers

The following table summarizes the total cost of unplanned outages for 67 data centers. The activity cost column summarizes detection, containment, recovery, and ex-post response costs.

If you have questions or comments about this report, please contact us by letter, phone call or email:

Ponemon Institute LLC

Attn: Research Department, 2308 US 31 North, Traverse City, Michigan 49686 USA

1.800.877.3118

Ponemon Institute

Advancing Responsible Information Management

Ponemon Institute is dedicated to independent research and education that advances responsible information and privacy management practices within business and government. Our mission is to conduct high quality, empirical studies on critical issues affecting the management and security of sensitive information about people and organizations.

As a member of the Council of American Survey Research Organizations (CASRO), we uphold strict data confidentiality, privacy and ethical research standards. We do not collect any personally identifiable information from individuals (or company identifiable information in our business research). Furthermore, we have strict quality standards to ensure that subjects are not asked extraneous, irrelevant or improper questions.